Hello Everyone and Happy Tuesday!

I’ve promised to write a full-blown article dedicated to troubleshooting Provisioning Services retries, but while that’s in the works I’ll share with you all a solution to an issue that I came across in a recent implementation of XenDesktop/PVS with VMware ESXi on Cisco UCS hardware. I’m sure most of you who work with PVS on a daily basis have seen at least some retries in your environment. While a certain amount (0-100) can be deemed acceptable, anything above that count is cause for concern. As a quick refresher, let’s remind ourselves of what PVS retries are and why they occur.

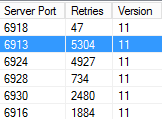

Retries in PVS are a mechanism to track packet drops in the streaming traffic between a Provisioning Server and a target device. Because that traffic is based on UDP, which is not so reliable (however optimized by Citrix), it’s very important that we don’t put configurations in place that would strangle that traffic to death (surely you don’t want your users complaining about application slowness and session latency). So if one day you look at the Show Usage tab of your vDisk in the PVS Console and realize you have hundreds or thousands of retries generated on some or most of your targets, you know that something is wrong in your environment and it has to be addressed immediately.
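If you have many targets, eyeballing the retry column gets tedious. Here is a minimal sketch of the check described above, assuming you have already copied the per-target retry counts out of the Show Usage tab into a dictionary. The function name, the device names, and the counts are all made up for illustration, and the threshold of 100 simply mirrors the rough 0-100 range mentioned earlier.

```python
from typing import Dict, List, Tuple

# Assumption: roughly 0-100 retries per target is treated as acceptable.
RETRY_THRESHOLD = 100


def targets_over_threshold(
    retries: Dict[str, int], threshold: int = RETRY_THRESHOLD
) -> List[Tuple[str, int]]:
    """Return (target, retries) pairs above the threshold, worst first."""
    flagged = [(name, count) for name, count in retries.items() if count > threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical counts, typed in by hand from the PVS Console.
sample_retries = {
    "WIN7-VDA-001": 12,
    "WIN7-VDA-002": 4375,
    "WIN7-VDA-003": 0,
    "WIN7-VDA-004": 860,
}

for name, count in targets_over_threshold(sample_retries):
    print(f"{name}: {count} retries")
```

Sorting worst-first makes it easier to see whether the retries cluster on a few targets (which might point at a specific host or port) or are spread across most of them.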

Of course, starting at the physical side and working your way up to the virtual layer is a good approach, even though a lot of times the opposite occurs because your network or storage teams will want hard evidence that it’s not your system that’s at fault before they get involved. I recommend involving them from the very beginning: while you are looking at your PVS configuration, they can start investigating routers, switches, cables, storage arrays, and other equipment. It could be something as simple as a malfunctioning switch port, outdated firmware, or even a misconfiguration of the VMware vSwitch and the NIC teaming settings. In this particular case, though, everything was configured correctly both on the Citrix side (2 PVS servers on Windows 2012 R2 and 100 Windows 7 SP1 VDA targets with Citrix best practices in place across the board) and on vSphere (6 ESXi hosts in a cluster with Standard vSwitches and virtual adapters dedicated to the PVS traffic). We even checked the firmware in UCS, which was slightly out of date, but updating it didn’t help either.

So what ended up being the issue? QoS! Cisco UCS has Fabric Interconnects (FIs) that provide connectivity for blade/rack servers within your chassis. Just like regular switches, FIs have Quality-of-Service capability that prioritizes traffic based on system classes as shown in the following picture:

So what if the VNIC that carries the PVS traffic has a drop-eligible, low-priority, or best-effort weight assigned to it? Yes, traffic will certainly get dropped! As a result, you will see retries generated in the PVS Console, and session latency is likely to occur on the target devices. The best thing you can do in this case is to DISABLE QoS for the PVS VNIC in the UCS Manager and reboot all your PVS target VMs. Arguably, you might be fine just assigning that VNIC a higher priority in the QoS stack, but I personally haven’t tested that option and recommend disabling QoS even if I have to take a bit of heat from the UCS Gurus 🙂
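To make the check above a bit more concrete, here is a rough sketch of how you might audit a list of VNICs once you have written down their QoS settings. It does not talk to UCS Manager at all: the `VnicQos` class, its fields, the VNIC names, and the set of "low" classes are hypothetical, and you would fill them in by hand from what you see in your own QoS policies.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set

# Assumption: which class names count as "low priority" is your call;
# only best-effort is listed here because the text singles it out.
LOW_PRIORITY_CLASSES: Set[str] = {"best-effort"}


@dataclass
class VnicQos:
    """Hand-entered QoS settings for one VNIC (hypothetical model)."""
    name: str
    priority: str        # system class noted for the VNIC's QoS policy
    drop_eligible: bool  # True if packets in that class may be dropped


def at_risk_vnics(
    vnics: Iterable[VnicQos], low_classes: Set[str] = LOW_PRIORITY_CLASSES
) -> List[str]:
    """Return names of VNICs whose traffic could be dropped under congestion."""
    return [
        v.name
        for v in vnics
        if v.drop_eligible or v.priority.lower() in low_classes
    ]


# Made-up inventory for illustration only.
inventory = [
    VnicQos(name="vnic-mgmt", priority="gold", drop_eligible=False),
    VnicQos(name="vnic-pvs", priority="Best-Effort", drop_eligible=True),
]

print(at_risk_vnics(inventory))
```

If the VNIC carrying your PVS streaming traffic shows up in that list, it is a candidate for the change described above.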

As always, any questions or feedback are welcome in the comments section. I hope this helps those of you who are experiencing this issue or just want to be proactive about it!